On More Load Testing Tools

If you’re planning to develop a new load testing tool, here’s a message that’ll save you millions of dollars on R&D:

Load testing tools have become way too easy to use.

At least too easy for the problems we’re supposed to solve. I understand that an easy transition from one tool to another is a selling point, and since we supposedly spend most of our time on test development, it’s the obvious thing to optimize. It does, however, hide the problems that would have surfaced when developing the test the “hard” way.

I’ll give you an example:

Proxy recording and automated correlation. A great feature in itself: it simplifies and speeds up the development process. The tester just hits the record button, clicks through the user interface, and the tool does all the work for them. After the recording, the tester is immediately gratified by green checkboxes. Green is good. It means you’ve done a good job. The test is done.

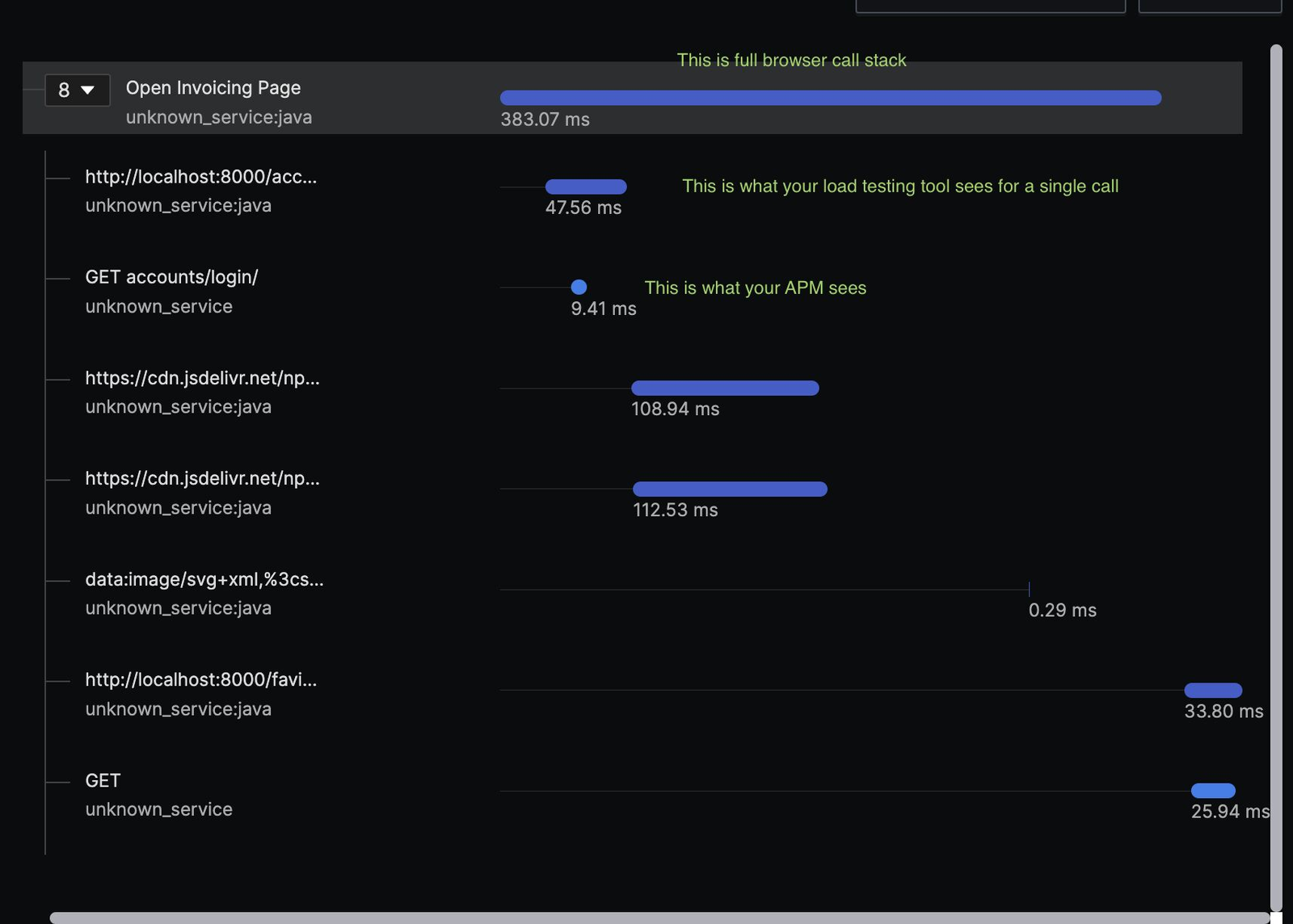

But… is it? A thorough review of the data returned by the application can already uncover performance and efficiency problems: no payload compression, no caching, redundant or repetitive server calls for the same value… the list goes on and on. These are improvements you can identify without even running a single user test. And the risk is that problems like these won’t even show up during your load test in a lab. A person trained only in proxy recording won’t catch them, even after a series of tests.
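To make that concrete, here is a minimal sketch of the kind of review I mean, written in Python. Everything in it is an assumption for illustration: the `RECORDING` list is a made-up, heavily simplified stand-in for what a proxy recording contains, and the `review` function and its `min_size` threshold are hypothetical, not part of any load testing tool.

```python
from collections import Counter

# Hypothetical, simplified recording: one dict per request/response pair.
# Real proxy recordings carry far more detail than this.
RECORDING = [
    {"method": "GET", "url": "/api/user/42",
     "headers": {"Content-Type": "application/json"}, "size": 48_000},
    {"method": "GET", "url": "/api/user/42",
     "headers": {"Content-Type": "application/json"}, "size": 48_000},
    {"method": "GET", "url": "/static/app.js",
     "headers": {"Content-Encoding": "gzip", "Cache-Control": "max-age=3600"},
     "size": 12_000},
]


def review(entries, min_size=1_000):
    """Return unique (finding, url) pairs for the recorded traffic."""
    findings = []
    for entry in entries:
        # Header names are case-insensitive, so normalize them first.
        headers = {name.lower() for name in entry["headers"]}
        # Sizeable response bodies sent without any content encoding.
        if entry["size"] >= min_size and "content-encoding" not in headers:
            findings.append(("uncompressed", entry["url"]))
        # GET responses with neither Cache-Control nor ETag.
        if entry["method"] == "GET" and not headers & {"cache-control", "etag"}:
            findings.append(("no caching headers", entry["url"]))
    # The same GET issued more than once within one recorded session.
    calls = Counter((e["method"], e["url"]) for e in entries)
    for (method, url), count in calls.items():
        if method == "GET" and count > 1:
            findings.append((f"repeated {count}x", url))
    # Drop duplicate findings while keeping the original order.
    return list(dict.fromkeys(findings))


if __name__ == "__main__":
    for finding, url in review(RECORDING):
        print(f"{finding:20} {url}")
```

None of this needs a single virtual user. The checks are deliberately crude, and a repeated call is not always a bug, but they point at exactly the kind of finding that a row of green checkboxes hides.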

Why am I bringing this up? The performance testing market is full of people skilled only in “tool X”. This often means their goal is not an efficient and resilient application but a successful test run, at all costs. And I blame the load testing tools for focusing on ease of use rather than the actual impact they could make.

But I could also be asking too much. Maybe I have to accept that troubleshooting and improvements already belong to observability tools, and start choosing load testing tools based on how well they integrate with the reporting tool I want.

Your thoughts?
